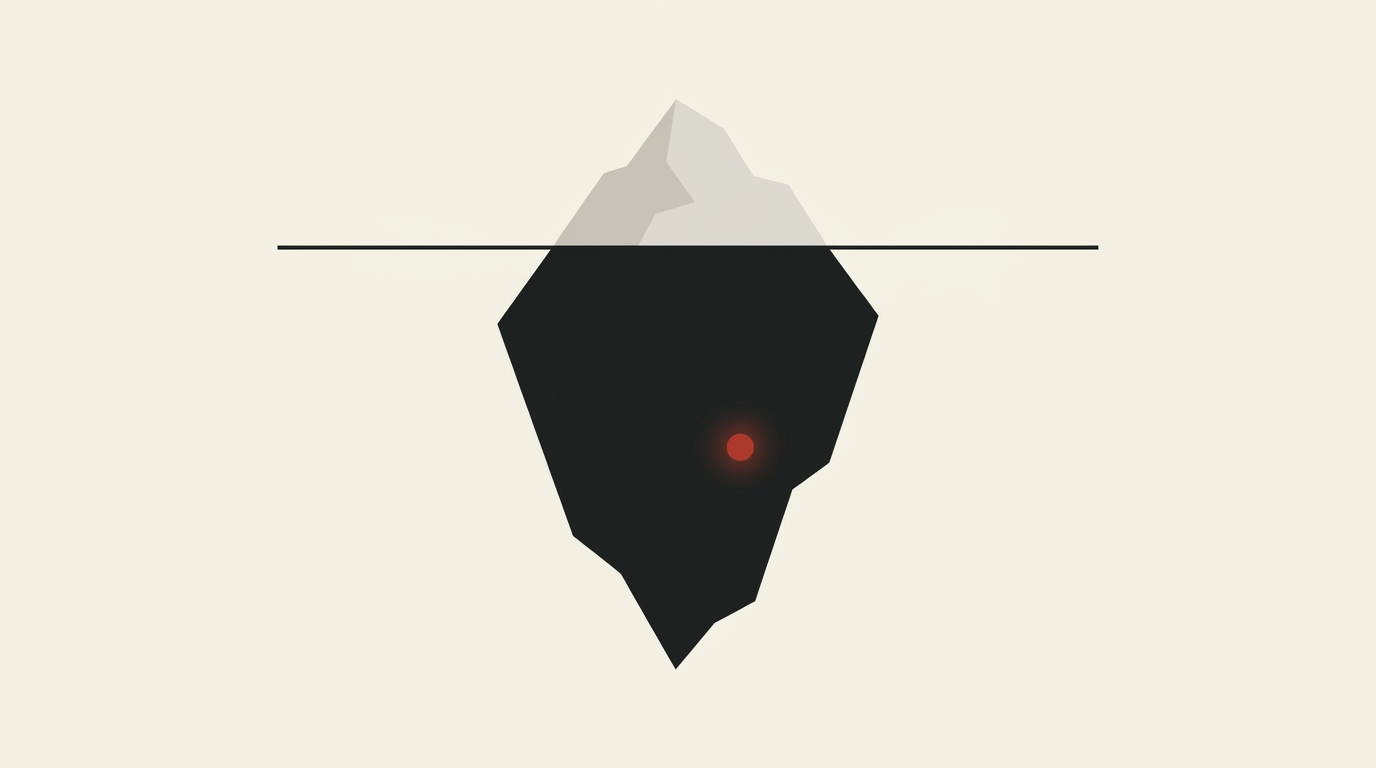

Shadow AI is your biggest security risk and your best innovation signal

I'm going to tell you something most business leaders probably already suspect but haven't yet confirmed. At least half your engineering team is pasting code into AI tools you haven't approved. Your marketing team is drafting content with ChatGPT on personal accounts, and your sales team is using Claude to write proposals. Your customer support team discovered a new AI summarisation tool three months ago and never told anyone.

You haven't approved any of this, and the scariest part is that you might not even know it's happening.

A Panorays survey from January 2026 found that 49% of employees had adopted AI tools without their employer's approval. And the amazing (or terrifying) part: 69% of C-suite members had no problem with it. Yep, that's right: the executives are fine with it. Meanwhile, the security team is somewhat blind to it, and the dependency graph doesn't account for it at all. This, my friends, is shadow AI, and it's growing faster than any official AI initiative your company has ever launched.

Banning AI tools is prohibition, and no doubt will work about as well

I've seen plenty of companies try the ban approach. "No unapproved AI tools." It's a sensible-sounding policy that fails for the same reason actual prohibition failed: the demand doesn't disappear, it just goes underground.

When you ban AI tools, engineers tend to move from company channels like Slack to their personal browsers. They use their phone to ask Claude about the bug they're stuck on. They copy code into ChatGPT on their personal laptop at home and bring the result back to work the next morning. You've achieved the worst of all worlds: the AI usage continues, but it's now completely invisible, running through personal accounts with no audit trail, and any data that touches those tools is beyond your reach forever.

The smarter companies I see have taken the opposite approach. Instead of banning, they channel. They give people an approved way to use AI tools that's easier than the shadow alternative, so most people use it. Not because they're obedient, but because they're lazy in a good way. The path of least resistance always wins. Always.

The security risk is genuinely scary, though

I'm not minimising the danger here. Shadow AI is genuinely dangerous.

Most enterprise AI tools come with data processing agreements, audit logs, and contractual commitments about how your company's data is handled. When your team uses personal ChatGPT accounts, none of those protections exist. That customer data in the support ticket may have just gone into OpenAI's consumer training pipeline, since consumer ChatGPT conversations can be used for model training unless the user opts out. That proprietary algorithm your engineer pasted in for debugging help could end up shaping a model that also serves your competitors. Sleep well tonight.

I covered Anthropic's double breach a couple of weeks ago. Both the Mythos leak and the Claude Code source leak were caused by human error, not external attacks. If the company that literally built its entire brand on being the "safety-first" AI lab can't prevent basic operational security failures, what makes you think your team's ad-hoc AI usage on personal accounts is ever going to be any safer?

Gartner predicts AI supply chain attacks will be a top-five attack vector by 2026. One in eight companies has already reported an AI breach linked to agentic systems. Malware in public model repositories is currently the leading reported source of AI breaches, at 35%. This isn't a future risk to plan for. It's a current one to deal with. Now.

But here's the bit nobody sees while everybody is panicking about policy

Shadow AI adoption is also the single most valuable signal your company has about where AI creates real value.

Just think about it for a second. Nobody forced your team to use these tools. Nobody incentivised them. Nobody ran a workshop (thank god!) or sent an email. Your people found these AI tools in their own time, learned them on their own initiative, and integrated them into their daily workflows because the tools genuinely helped them do their jobs better.

That's as organic a product-market fit as you'll ever find. And it's also the data that every AI strategy should be built on.

When you discover that your entire frontend team has been using Cursor without approval, what you've really learned is that AI-assisted coding has genuine workflow value for frontend development at your company. When you find out marketing has been drafting everything public-facing in Claude, you've learned that AI writing assistance is saving your content team measurable hours every week.

The shadow AI audit shouldn't just be a security exercise. It should be your AI strategy's first and most honest research phase.

So, how do you run an audit without terrifying everyone?

Here's a practical framework for discovering what your team is using. No judgement, no punishment. If you make this punitive, people will simply hide their usage and you'll learn nothing. Worse still, you'll lose their trust. Be a grown-up about it instead.

The anonymous survey. Ask every team to list the AI tools they use, how often, and for what tasks. Make it properly anonymous. Be crystal clear that you're trying to understand where AI is adding value so you can invest properly. Frame it as "help us help you" because that's honestly what it is.
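If the survey comes back as a spreadsheet export, tallying it takes only a few lines. Here's a minimal Python sketch, assuming each anonymous response is a row with hypothetical `team`, `tool`, `frequency` and `task` fields and no names or emails. The sample rows are made up purely for illustration.

```python
from collections import Counter, defaultdict

# Made-up anonymous responses: team, tool, how often, and for what. No personal identifiers.
responses = [
    {"team": "engineering", "tool": "Cursor", "frequency": "daily", "task": "code completion"},
    {"team": "engineering", "tool": "ChatGPT", "frequency": "weekly", "task": "debugging"},
    {"team": "marketing", "tool": "Claude", "frequency": "daily", "task": "drafting copy"},
    {"team": "support", "tool": "ChatGPT", "frequency": "daily", "task": "ticket summaries"},
]

def summarise(rows):
    """Return respondents per tool, daily users per tool, and the tasks each tool is used for."""
    usage = Counter(row["tool"] for row in rows)
    daily = Counter(row["tool"] for row in rows if row["frequency"] == "daily")
    tasks = defaultdict(set)
    for row in rows:
        tasks[row["tool"]].add(row["task"])
    return usage, daily, tasks

usage, daily, tasks = summarise(responses)
for tool, count in usage.most_common():
    # Counter returns 0 for tools nobody uses daily, so daily[tool] is always safe.
    print(f"{tool}: {count} respondent(s), {daily[tool]} daily, tasks: {sorted(tasks[tool])}")
```

The task list is the part worth reading closely: it tells you what each tool is actually being used for, which is the signal your roadmap needs.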

The network analysis. Your IT team can identify traffic from your corporate network to known AI providers, both API endpoints (api.openai.com, api.anthropic.com, generativelanguage.googleapis.com) and consumer chat apps such as chatgpt.com and claude.ai, since browser use of those apps won't show up as API calls. This won't catch personal device usage, but it does catch the corporate device usage you're responsible for, and it gives you a strong enough signal. You might be surprised by just how chatty your network is with AI providers you've never approved.
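For a first pass, your IT team might scan DNS or proxy logs for those hostnames. The sketch below assumes a hypothetical whitespace-separated log format of `timestamp device hostname`; real DNS and proxy exports differ, so treat the parsing as a placeholder. The host set starts with the three API endpoints above, and adding the consumer chat domains is my assumption about what's worth watching.

```python
from collections import Counter

AI_HOSTS = {
    "api.openai.com",
    "api.anthropic.com",
    "generativelanguage.googleapis.com",
    # Assumption: consumer chat apps, since browser use doesn't hit the API hosts.
    "chatgpt.com",
    "claude.ai",
}

def is_ai_host(hostname: str) -> bool:
    """True if hostname is one of AI_HOSTS or a subdomain of one (case-insensitive)."""
    hostname = hostname.lower().rstrip(".")
    return any(hostname == h or hostname.endswith("." + h) for h in AI_HOSTS)

def count_ai_traffic(log_lines):
    """Count requests per (device, host) from lines shaped 'timestamp device hostname'."""
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) < 3:
            continue  # skip malformed lines rather than failing the whole scan
        _, device, hostname = parts[:3]
        if is_ai_host(hostname):
            hits[(device, hostname.lower().rstrip("."))] += 1
    return hits

sample_log = [
    "2026-01-12T09:14:02 laptop-042 api.openai.com",
    "2026-01-12T09:14:05 laptop-042 api.openai.com",
    "2026-01-12T09:20:11 laptop-107 claude.ai",
    "2026-01-12T09:21:40 laptop-107 intranet.example.com",
]

for (device, host), n in count_ai_traffic(sample_log).most_common():
    print(f"{device} -> {host}: {n} request(s)")
```

Matching on exact hosts or their subdomains, rather than a loose substring search, avoids flagging lookalike domains such as `notapi.openai.com`.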

The expense audit. Parse expense reports and corporate card statements for ChatGPT, Claude, Copilot, Jasper, Copy.ai, Cursor, and other AI tool subscriptions. You'll find individual subscriptions scattered across various teams that nobody ever centralised. I'll guarantee you're paying for at least three tools that do exactly the same thing.
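Card and expense exports usually arrive as CSV, so a keyword scan gets you most of the way. Here's a minimal sketch, assuming hypothetical `team`, `merchant` and `amount` columns; real statement descriptors vary, and substring matching will throw up false positives (a café called "Cursor" would match), so eyeball the results.

```python
import csv
import io
from collections import defaultdict

# Vendor keywords based on the tools listed above; matched case-insensitively as substrings.
AI_VENDORS = ["chatgpt", "openai", "claude", "anthropic", "copilot", "jasper", "copy.ai", "cursor"]

def find_ai_subscriptions(csv_text: str):
    """Group matching expense lines by vendor keyword -> list of (team, amount)."""
    found = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        merchant = row["merchant"].lower()
        for vendor in AI_VENDORS:
            if vendor in merchant:
                found[vendor].append((row["team"], float(row["amount"])))
                break  # count each expense line once, under its first matching keyword
    return found

sample = """team,merchant,amount
engineering,Cursor Pro,20.00
marketing,OPENAI *CHATGPT SUBSCR,20.00
sales,Anthropic Claude Pro,18.00
marketing,Jasper AI,49.00
finance,Office Supplies Ltd,35.50
"""

result = find_ai_subscriptions(sample)
for vendor, lines in sorted(result.items()):
    teams = sorted({team for team, _ in lines})
    total = sum(amount for _, amount in lines)
    print(f"{vendor}: {len(lines)} line(s), teams={teams}, total={total:.2f}")
```

Grouping by vendor and listing the teams is what surfaces the duplicates: the same kind of tool bought separately by three different departments.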

The conversation. This is the most important one. Take what you've found and have honest conversations with your team leads. "We understand your team is using Claude to review code. That's great. Let's make it official: we'll put proper data handling in place and fund it properly." Watch their shoulders drop with relief and a smile spread across their faces.

A three-tier policy that people will follow

After the audit, you need a policy that people will follow, not a 40-pager that nobody reads. A framework that fits on one page and is just common sense.

Green tier: tools that are approved and funded. These are the official tools with enterprise agreements, data processing contracts, and audit trails. Use them freely for any work data. This is the tier you want everyone living in. Make sure it's good enough that nobody feels the need to go elsewhere.

Amber tier: approved with some restrictions. These tools can be used for non-sensitive work only. No customer data, no proprietary code, no financial information. Personal accounts are acceptable for general research and exploring ideas (with AI tools, the best results sometimes come from seeing how several tools approach the same problem). Think of this as the "please, use your common sense" tier.

Red tier: prohibited. Tools with known security risks, no data processing agreements, or operations in jurisdictions with weak data protection or ethical standards. Block these at the network level where possible.
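If you want the one-page policy to be machine-checkable (say, for an internal "can I use this?" bot), it boils down to a small lookup table. This is a minimal sketch; the tool names and tier assignments are hypothetical placeholders, not recommendations, and the sensitive categories mirror the amber-tier exclusions above.

```python
from enum import Enum

class Tier(Enum):
    GREEN = "approved and funded"
    AMBER = "approved with restrictions"
    RED = "prohibited"

# Hypothetical tool register: placeholder names, not real products.
TOOL_TIERS = {
    "enterprise-assistant": Tier.GREEN,
    "consumer-chatbot": Tier.AMBER,
    "unvetted-summariser": Tier.RED,
}

# Data the amber tier must never see: customer data, proprietary code, financial information.
SENSITIVE = {"customer_data", "proprietary_code", "financial"}

def is_allowed(tool: str, data_category: str) -> bool:
    """Green allows any work data, amber only non-sensitive data, red nothing."""
    tier = TOOL_TIERS.get(tool, Tier.RED)  # design choice: unreviewed tools count as red
    if tier is Tier.GREEN:
        return True
    if tier is Tier.AMBER:
        return data_category not in SENSITIVE
    return False

print(is_allowed("consumer-chatbot", "general_research"))
print(is_allowed("consumer-chatbot", "customer_data"))
```

Defaulting unknown tools to red is only one option. If you do it, pair it with a fast review path so the green tier keeps growing; otherwise people will route around it, which is exactly the problem this policy is meant to solve.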

The key, and I cannot stress this enough, is making the green tier genuinely good. If the approved tools are worse than the shadow alternatives, people will keep using the shadow alternatives. Every single time. You can write all the policies you want, but human behaviour doesn't read policy documents. Think about how often you honestly read the Terms and Conditions, or the cookie policies we tell ourselves are there to protect us. Invest in the green tier. Make it easier to use than the unapproved option. That's the security strategy that actually works.

Turning discovery into your AI roadmap

The audit data tells you three things that should shape your entire AI strategy.

Where AI delivers immediate value. The tools people adopt voluntarily are the ones solving real problems. These aren't theoretical use cases from a consultant's slide deck. These are real workflows used by real people in your company, valuable enough that they sought the tools out of their own accord. Double down on these. Fund them. Scale them.

Where AI has unmet demand. If teams cobble together workarounds with consumer tools, there's probably an enterprise-grade solution out there that would work much better with proper data handling and integration. The shadow usage in your team is pointing you directly at the investment opportunity. Listen to it.

Where the risks are concentrated. The teams with the most shadow AI usage are the ones pushing the most data through uncontrolled channels. Prioritise getting them onto approved tools first. That's your risk-reduction roadmap.
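Turning the audit into a migration order can be as simple as ranking teams by how much data they push through unapproved channels. Here's a minimal sketch; the fields, the sample numbers, and the "double it if the team handles sensitive data" weighting are all hypothetical assumptions you'd tune to your own audit.

```python
from dataclasses import dataclass

@dataclass
class TeamFindings:
    team: str
    shadow_tools: int        # distinct unapproved tools found for this team
    weekly_uses: int         # rough usage volume pieced together from survey and network data
    handles_sensitive: bool  # does the team routinely touch customer, code or financial data?

def risk_score(f: TeamFindings) -> int:
    """Hypothetical weighting: usage volume, doubled for teams handling sensitive data."""
    return f.weekly_uses * (2 if f.handles_sensitive else 1)

findings = [
    TeamFindings("marketing", shadow_tools=2, weekly_uses=40, handles_sensitive=False),
    TeamFindings("support", shadow_tools=1, weekly_uses=60, handles_sensitive=True),
    TeamFindings("engineering", shadow_tools=3, weekly_uses=50, handles_sensitive=True),
]

# Highest score first; the number of shadow tools breaks ties.
roadmap = sorted(findings, key=lambda f: (risk_score(f), f.shadow_tools), reverse=True)
for rank, f in enumerate(roadmap, start=1):
    print(f"{rank}. {f.team} (score {risk_score(f)}, {f.shadow_tools} shadow tool(s))")
```

The exact formula matters less than writing it down: once the weighting is explicit, team leads can argue with it, which is a far better conversation than arguing about who got caught.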

Your AI strategy shouldn't start with a vendor evaluation or a workshop with Post-it notes on a wall (thank me later). It should start with understanding what your team has already discovered on their own. They've already done the research for you. All you have to do is ask...

This is part two of the Sustainable AI series. Next article: Your codebase wasn't built for AI. Here's how to bridge the gap.
